Memory Hardening: Why Your Keys Shouldn't Survive a Cellebrite Box

Your encrypted messenger is great at encrypting. It's bad at hiding the keys.

Vector does both.

In April 2021, Moxie Marlinspike, Signal's founder, published a blog post that shook the digital forensics industry. He'd gotten hold of a Cellebrite UFED device (the box police plug seized phones into to extract everything on them) and found the software was so sloppily written that he could plant exploits in innocent-looking files that would compromise the Cellebrite box itself when it scanned them.

It was a glorious hack. It was also kind of beside the point.

Because while everyone laughed at Cellebrite's terrible code, nobody was asking the more uncomfortable question: if a Cellebrite box manages to do what it's designed to do (dump your phone's memory), would your Signal keys survive?

Turns out: no. They wouldn't.

Signal keys sit in memory as plain [u8; 32] arrays. Telegram's MTProto auth key is a std::array with no destructor. WhatsApp's keys live in a SQLCipher database whose password is recoverable from RAM. The encryption is strong. The key storage is not.

This is the gap Vector's Memory Hardening was designed to close.

The Quiet Assumption

"End-to-end encryption" means something very specific: messages are encrypted on your device, travel encrypted, and are decrypted on the other device. Nobody in the middle can read them. That part works. That part has worked for years.

But there's a quiet assumption buried in that sentence: the device holding the keys isn't compromised. The moment that assumption breaks (seized phone, malware infection, forensics tool, even a crashed app dumping core), everything unravels.

Most mainstream messengers treat key storage as a solved problem. It isn't. It's just ignored.

- Signal Desktop stores identity keys in a plain JavaScript Map. At the Rust layer, libsignal's PrivateKey derives Copy, meaning the raw [u8; 32] gets freely duplicated on the stack without being zeroised. The zeroize crate is in the workspace. It's just not applied to asymmetric keys.

- Telegram Desktop keeps the MTProto auth key as a plain std::array<gsl::byte, 256> with no destructor. The only memory cleanup happens on DH exchange temporaries, well after the real key has been copied into the unprotected AuthKey.

- WhatsApp is closed-source, so we can't audit its in-memory key handling directly. But Cellebrite openly advertises WhatsApp extraction from seized devices, so the problem is clearly there too.

None of them defend against memory dumps. They rely entirely on the OS keeping their process private. When that assumption breaks, there's no second line. If an attacker gets a snapshot of your phone's RAM, grepping for a 32-byte aligned high-entropy block finds the key in milliseconds.
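
To make that scan concrete, here is a minimal Rust sketch of what "grepping for a 32-byte aligned high-entropy block" could look like. It is an illustration, not any real forensic tool's code: the function names and the entropy threshold are assumptions chosen for the example.

```rust
/// Shannon entropy of a byte slice, in bits per byte.
/// For a 32-byte block the maximum is 5.0 (all 32 bytes distinct).
fn shannon_entropy(block: &[u8]) -> f64 {
    let mut counts = [0usize; 256];
    for &b in block {
        counts[b as usize] += 1;
    }
    let len = block.len() as f64;
    counts
        .iter()
        .filter(|&&c| c > 0)
        .map(|&c| {
            let p = c as f64 / len;
            -p * p.log2()
        })
        .sum()
}

/// Returns the offsets of 32-byte-aligned blocks whose entropy is at
/// least `threshold`. The threshold is an illustrative assumption.
fn find_key_candidates(dump: &[u8], threshold: f64) -> Vec<usize> {
    dump.chunks_exact(32)
        .enumerate()
        .filter(|(_, block)| shannon_entropy(block) >= threshold)
        .map(|(i, _)| i * 32)
        .collect()
}
```

Against a typical process image, where most memory is zeros, text, and pointers, a filter like this leaves very few candidates. That is why a key stored as one contiguous block is so easy to find.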

This isn't theoretical. Here's who's actually paying for it.

Hong Kong, And Beyond

In 2019, during the mass protests in Hong Kong, the Hong Kong Police Force used Cellebrite UFED tools against arrested protesters. Court filings made public in 2020 showed that data from an estimated 4,000 citizens' phones had been extracted this way. They didn't need an exploit. They didn't need to compromise the messengers. They plugged in seized phones and extracted everything: full message histories, social graphs, locations, encryption keys.

Cellebrite announced in October 2020 that it would stop selling its digital-intelligence offerings in Hong Kong and China after significant public backlash. It was a gesture. The devices they'd already sold weren't recalled. The Intercept later reported in August 2021 that Chinese police kept buying Cellebrite tools after the announcement anyway. And Cellebrite continued selling to dozens of other governments whose human rights records aren't meaningfully better.

This isn't the only case where Vector's Memory Hardening would've mattered:

- Serbia (2024): Amnesty International documented how Serbian authorities used Cellebrite and a custom NoviSpy Android spyware to unlock and compromise activists' phones after seizure. A Digital Prison (Amnesty)

- US Border (ongoing): Customs and Border Protection performed over 40,000 device searches in 2022 alone, using Cellebrite and similar tools. No warrant required. EFF border search guide

- Belarus (2020 onwards): Mass arrests of protesters, followed by routine phone checks of people returning from abroad and prosecutions based on 2020 protest photos and channel subscriptions found on seized phones. Human Rights Watch reporting

- Ukraine (2022 to present): Russian forces extracting data from captured civilians' devices at checkpoints during the invasion. Human Rights Watch "We Had No Choice" filtration report

- Dissidents worldwide: Cellebrite is sold to over 60 governments. Wherever there are protesters, journalists, or activists, there's a significant chance a forensics box is what tests their privacy. Not network surveillance. Not law enforcement subpoenas.

For every one of these, if the victim's messenger had stored keys the way Vector does, the forensic extract would yield noise. Not a jackpot.

We're not going to claim Vector solves authoritarianism or the erosion of privacy rights. But for the specific moment when a device is taken, a box is plugged in, and a button is pressed, we're the messenger that makes that moment a dead end instead of an unlock.

These aren't hypotheticals. They're the battleground. Here's how Vector's Memory Hardening defends against the three attack vectors mainstream messengers leave open.

1. The Cellebrite Box

The Simple Version

You get stopped at a border. An officer takes your phone for 30 minutes. When it's returned, nothing seems different. But back at a data centre somewhere, a Cellebrite UFED box just extracted the entire contents: files, messages, location history, and the encryption keys from every messaging app you have installed.

Your messages looked "encrypted" on disk. Sure. But the keys were right there in memory, alongside everything else. Plug the dump into a Cellebrite Physical Analyzer workstation, type in the app name, and out come decrypted transcripts of every conversation you've ever had.

For Vector, that extraction fails. Not because we dodge the dump (we can't), but because the dump itself doesn't contain a recoverable key.

Under the Hood

We don't store the key. We store 4 XOR shares of it, each one 32 random-looking bytes, scattered across 128 indistinguishable arrays. 4 of those arrays contain real share data. The other 124 are pure decoys, OsRng-initialised at startup so their contents look exactly like real shares.
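
The XOR-sharing idea itself is simple enough to sketch. The Rust below is a minimal illustration, not Vector's actual vault code: the names split_key and combine_shares are hypothetical, and the random blocks are passed in by the caller to keep the example dependency-free (real code would draw them from a CSPRNG such as OsRng).

```rust
const KEY_LEN: usize = 32;
const SHARES: usize = 4;

/// Splits `key` into four shares whose XOR equals the key.
/// `random` must hold three blocks of fresh CSPRNG output; the fourth
/// share is computed so that all four XOR back to the original key.
fn split_key(
    key: &[u8; KEY_LEN],
    random: &[[u8; KEY_LEN]; SHARES - 1],
) -> [[u8; KEY_LEN]; SHARES] {
    let mut shares = [[0u8; KEY_LEN]; SHARES];
    let mut last = *key;
    for (i, r) in random.iter().enumerate() {
        shares[i] = *r;
        // Fold each random block into the final share.
        for (l, b) in last.iter_mut().zip(r) {
            *l ^= *b;
        }
    }
    shares[SHARES - 1] = last;
    shares
}

/// Recombines the key by XOR-ing all four shares together.
fn combine_shares(shares: &[[u8; KEY_LEN]; SHARES]) -> [u8; KEY_LEN] {
    let mut key = [0u8; KEY_LEN];
    for share in shares {
        for (k, b) in key.iter_mut().zip(share) {
            *k ^= *b;
        }
    }
    key
}
```

When the three random blocks are uniformly random, any subset of fewer than four shares is statistically independent of the key. An attacker holding three of the four learns nothing about it.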

All 128 arrays are the same size. They get the same allocation timing. During key storage they all receive random writes simultaneously, so a before/after memory diff doesn't tell you which was "the real write". And the positions where the real shares live are derived from the vault's runtime address, which ASLR changes every single launch.
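
As a rough illustration of address-derived placement, the Rust sketch below picks four distinct array indices out of 128 from a runtime address. Everything in it is an assumption made for the example: the function names are hypothetical, and the mixer is a standard SplitMix64 finaliser with fixed constants, which is a simplification and not the derivation Vector uses.

```rust
const ARRAYS: usize = 128;
const SHARES: usize = 4;
const GAMMA: u64 = 0x9E37_79B9_7F4A_7C15;

/// SplitMix64 finaliser, used here as a stand-in mixing function.
fn mix(mut x: u64) -> u64 {
    x ^= x >> 30;
    x = x.wrapping_mul(0xBF58_476D_1CE4_E5B9);
    x ^= x >> 27;
    x = x.wrapping_mul(0x94D0_49BB_1331_11EB);
    x ^ (x >> 31)
}

/// Uses the address of a live object as the seed. With ASLR enabled,
/// this value changes from one launch to the next.
fn runtime_seed<T>(vault: &T) -> u64 {
    vault as *const T as usize as u64
}

/// Derives `SHARES` distinct array indices in `0..ARRAYS` from `seed`.
fn share_positions(seed: u64) -> [usize; SHARES] {
    let mut picked = [usize::MAX; SHARES];
    let mut counter = 0u64;
    let mut n = 0;
    while n < SHARES {
        counter += 1;
        let r = mix(seed.wrapping_add(counter.wrapping_mul(GAMMA)));
        let idx = (r % ARRAYS as u64) as usize;
        // Skip indices that were already chosen so the shares never collide.
        if !picked[..n].contains(&idx) {
            picked[n] = idx;
            n += 1;
        }
    }
    picked
}
```

The same address always yields the same positions, so the running process can find its shares again. Someone reading a dump without knowing the address has to guess it first.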

For a Cellebrite operator analysing the dump: grep for a 32-byte high-entropy block, get hundreds of thousands of hits across our arrays. None of them is the key. All of them look exactly like it.

Reconstructing the real key means brute-forcing the ASLR base address, which is different on every launch. Even with our algorithm in hand (it's open source), each seized device costs hours of single-core compute to crack. Cellebrite sells push-button, fleet-scale extraction. Hours of compute per phone isn't compatible with that.

2. Your RAM, On Disk Or Off

The Simple Version

Two flavours of the same attack:

The swap file trap. You're running Linux on an older laptop. You open Vector alongside a browser tab and a compile job. Memory gets tight, and the kernel quietly swaps some of Vector's pages to disk to free up RAM. You didn't notice. The app kept working. But now your private key, which mainstream messengers leave sitting as a contiguous 32-byte block in memory, just got written to your swap file. And that's where it stays. Disks don't securely erase; the blob remains on physical storage until that specific sector gets overwritten, which on a typical system is weeks, months, or even years away. The file outlives the process, outlives a reboot, and can be read by anyone with access to the disk.

The malware memory scan. You install a sketchy browser extension. It turns out to be a commercial infostealer that harvests crypto wallets, session cookies, saved passwords, and now messaging app keys too. The tool scans other processes' memory, looking for patterns it recognises. For most messengers, that's grep-for-a-32-byte-block and done.

Different attacks, same root cause: your key exists somewhere as a contiguous, recognisable, grep-able blob of bytes. For most apps, that's a disaster nobody talks about. For Vector, there's no such blob to find.

Under the Hood

The key never exists as a contiguous 32-byte blob in memory at rest. Only 4 independent XOR shares, scattered among hundreds of thousands of other random entries across 128 arrays. If a page containing one share hits swap, an attacker reading that swapfile sees one 32-byte high-entropy blob alongside millions of others. Not enough to reconstruct anything. They'd need all 4 shares, from 4 specific (ASLR-randomised) positions, from a process that no longer exists.

For live malware scanning the running process: same problem, different direction. Our vault uses zero compile-time constants. Every multiplier, every iteration count, every derivation step is computed at runtime from ASLR addresses. There's no magic number in our binary that says "the vault is here". The code that reads a key compiles to generic LDR + MUL + AND + EOR + LSR instructions, indistinguishable from the thousands of hash, cipher, and RNG functions in any other part of the binary. Commodity infostealers work by signature-matching known app patterns. Our pattern is: nothing distinctive.

The actual plaintext key only appears on the stack for microseconds during a signing operation, then gets zeroised with volatile writes. The window where it could be swapped, dumped, or scanned is vanishingly small.
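
For readers who want to see what "zeroised with volatile writes" means in practice, here is a minimal Rust sketch using only the standard library. The ScopedKey type is a hypothetical name for illustration, not Vector's code; the approach is similar in spirit to what the zeroize crate offers.

```rust
use std::ptr;
use std::sync::atomic::{compiler_fence, Ordering};

/// Key material that is wiped when it goes out of scope.
/// Deliberately neither `Copy` nor `Clone`, so the compiler never
/// makes implicit duplicates that would escape the wipe.
struct ScopedKey([u8; 32]);

impl ScopedKey {
    /// Overwrites the key bytes with zeros.
    fn wipe(&mut self) {
        for byte in self.0.iter_mut() {
            // A volatile write cannot be removed as a "dead store",
            // even though the value is never read again.
            unsafe { ptr::write_volatile(byte, 0) };
        }
        // Keep the compiler from reordering later memory accesses
        // ahead of the wipe.
        compiler_fence(Ordering::SeqCst);
    }
}

impl Drop for ScopedKey {
    fn drop(&mut self) {
        self.wipe();
    }
}
```

One caveat with this sketch: moving a ScopedKey by value is still a byte copy, and the old location is not wiped. Code built this way should create the key where it will be used and pass it by reference.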

We don't use mlock. It sounds counterintuitive, but hear us out: mlock requires elevated permissions on some platforms, doesn't survive hibernation, doesn't cover all relevant pages on Android, and would put a syscall beacon next to the thing we're hiding. Instead, we guarantee the key is never interesting in the first place.

This is the defence we're proudest of. Not because it's clever (it's actually pretty straightforward), but because it's an attack surface nobody else takes seriously, and it affects real users, silently, every day.

3. The Brute-Force Backup

The Simple Version

An attacker gets hold of your encrypted Vector database from a file backup, a forensics disk image, or a compromised cloud sync. They don't have your password, but they have infinite time and a GPU farm.

For a typical app using PBKDF2 with a modest iteration count: each password guess takes microseconds. A committed attacker cracks through a weak password in hours.

Against Vector: it takes years.

Under the Hood

We derive the disk encryption key using Argon2id, which is memory-hard. You can't just throw GPUs at it, because each guess requires hundreds of megabytes of actual RAM. We tune parameters so each guess takes ~1 second on decent hardware. A password dictionary of 100 million words takes ~3 years to brute-force, single-GPU.
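
The arithmetic behind that estimate is easy to check. The short Rust sketch below computes the worst-case time to try every entry in a dictionary; the function names are illustrative, and the 1 microsecond figure in the note that follows is an assumed stand-in for "microseconds" per guess with a fast hash.

```rust
/// Worst-case time to try every guess once, in seconds.
fn exhaustive_search_seconds(guesses: u64, seconds_per_guess: f64) -> f64 {
    guesses as f64 * seconds_per_guess
}

/// The same figure expressed in years (365.25-day years).
fn exhaustive_search_years(guesses: u64, seconds_per_guess: f64) -> f64 {
    const SECONDS_PER_YEAR: f64 = 365.25 * 24.0 * 3600.0;
    exhaustive_search_seconds(guesses, seconds_per_guess) / SECONDS_PER_YEAR
}
```

At 1 second per guess, 100 million guesses come to 100,000,000 seconds, or about 3.17 years. At an assumed 1 microsecond per guess, the same dictionary takes about 100 seconds. On average an attacker finds the password halfway through, so expected times are half of these.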

Signal Desktop uses PBKDF2. Telegram uses PBKDF2. PBKDF2 is fine for what it does, but it's not memory-hard. You can parallelise the hell out of it on a GPU. Argon2id, by design, refuses to cooperate with that.

This doesn't make your password unbreakable. A 4-character PIN is still a 4-character PIN. But if your password has any meaningful entropy, Argon2id makes the cracking attempt impractical.

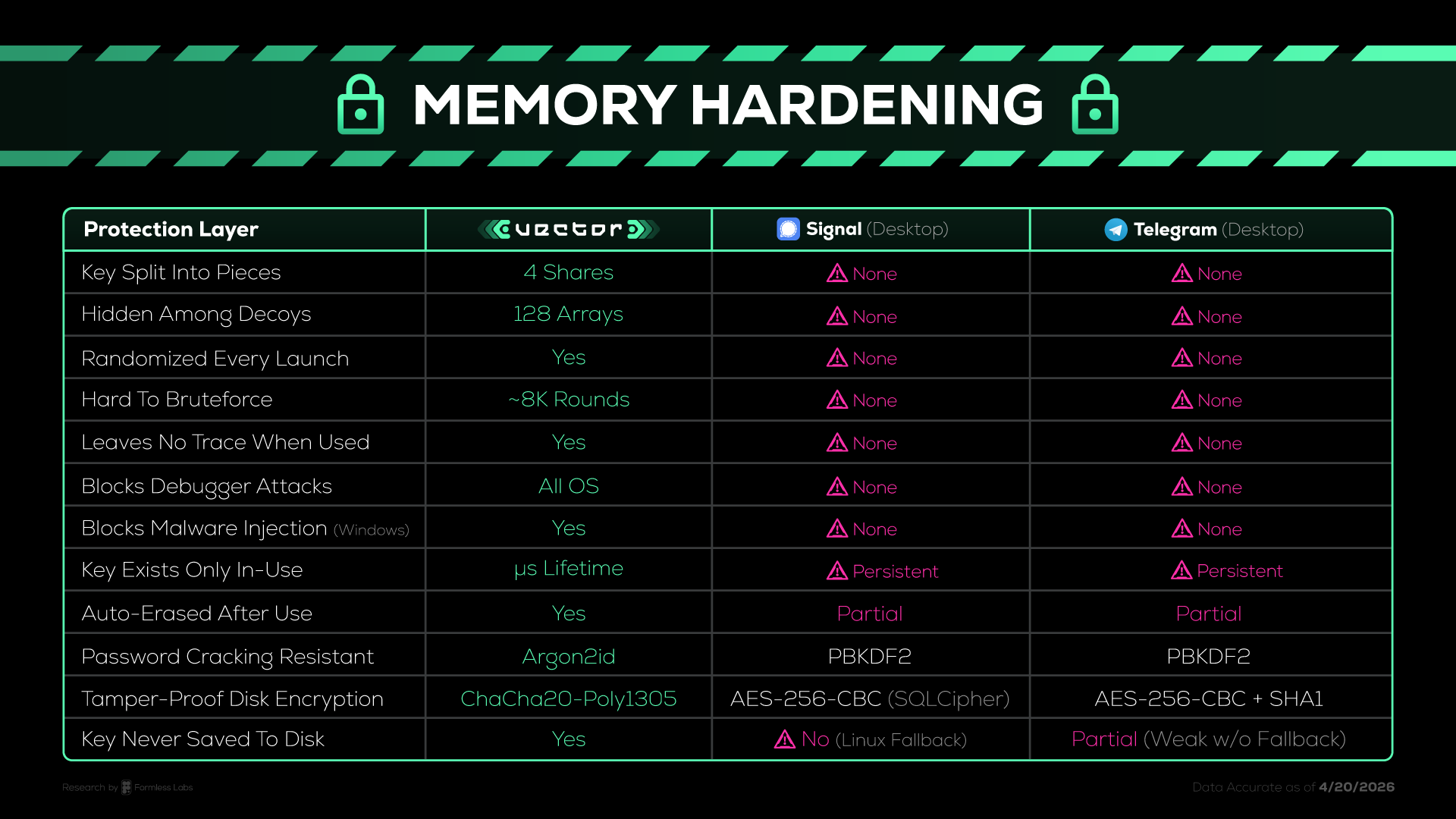

How This Actually Compares

Cells marked "None" aren't being hyperbolic. We checked the code of each: Signal Desktop commit a8118faf, libsignal commit a5e76674, Telegram Desktop commit ba4715a3. All citations are in our memory-security doc. If our analysis is wrong, open an issue. We'll retract.

The Honest Disclaimer

Here's what Memory Hardening doesn't protect against:

- Keyloggers. If something captures your password before it reaches Vector, no amount of vault engineering saves you.

- Kernel rootkits. Root access to the OS trumps userspace protections. A kernel-level rootkit can bypass the vault entirely by modifying Vector's code in memory to intercept keys during the microsecond signing window.

- Same-user code injection with elevated privileges. An attacker who can load their own code into Vector's process before it starts (via environment variables, injected libraries, or patched binaries) can call key retrieval functions directly.

- Human compromise. Social engineering, threats, rubber-hose cryptanalysis. Software can't save you from these. Only operational security can.

We're not claiming Vector is unbreakable. We're claiming it's several orders of magnitude harder to break than what mainstream messengers offer. Specifically against the attack vectors that actually get used in the real world (forensics tools, infostealers, swap file leaks, debugger attach), and which mainstream messengers apparently consider out of scope.

The honest summary: if you have a phone in the hands of a nation-state actor with a fresh kernel exploit, no messenger protects you. But if you have a phone that ends up in a Cellebrite-equipped police van? Or a laptop that crashes and dumps core? Or malware sniffing for 32-byte key patterns in memory? Those are the attacks we protect against. Those are the ones that actually happen to people.

Try It

Memory Hardening is live in Vector v0.3.3 and every release after it. It's on by default. It doesn't require a setting you'd never find. You don't have to know what any of this means. That's the point. We protect you without you knowing it. That's the difference.

- Download Vector: Available for macOS, Windows, Linux, and Android.

- Read the architecture doc: memory-security.md

- Read the commit: security: memory-hardened key vault with anti-debug and zeroize

- Audit us: everything is open source. We publish the algorithm, the source, the threat model, the comparison methodology, and the version hashes of every competitor app we analysed. Check our claims. Break them if you can.